Along with social, mobile and cloud, analytics and associated data technologies have emerged as core business disruptors in the digital age. As companies shifted from being data generators to becoming data-driven organizations in 2017, data and analytics became the center of gravity for many companies. In 2018, these technologies must begin to generate value. These are the approaches, roles and concerns that will drive data analytics strategies in the coming year.

Data lakes will need to prove their business value or they won’t last

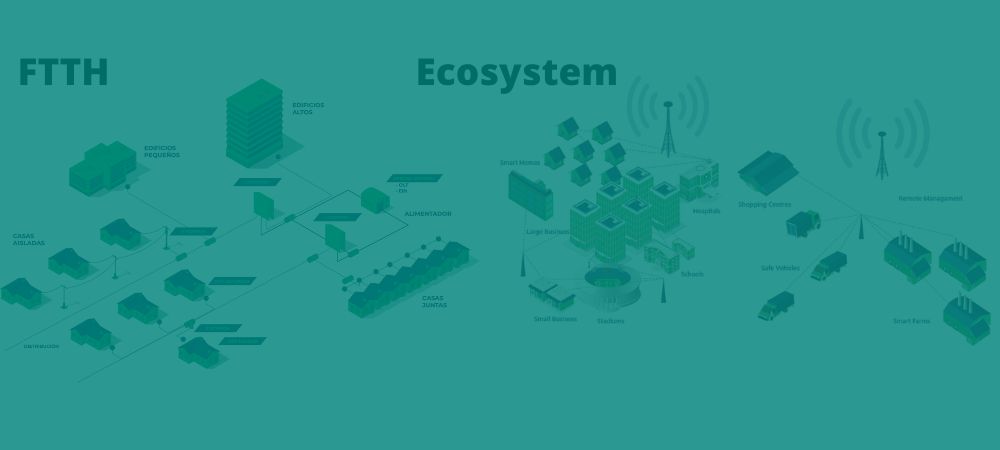

Data has been accumulating in the enterprise at a dizzying pace for years. The Internet of Things (IoT) will only accelerate data creation as data sources move from the web to mobile devices, and then to machines.

“This has created a great need to scale data channels in a cost-effective way,” noted Guy Churchward, CEO of real-time data platform provider DataTorrent.

For many enterprises, driven by technologies such as Apache Hadoop, the answer was to create data lakes: data management platforms that store all of an organization’s data in native formats. Data lakes promised to break down information silos by providing a single data repository that could be used by the entire organization for everything from business analytics to data mining. These lakes of raw, ungoverned data established themselves as a big data ‘catch-all’ and ‘cure-all’.

“The data lake worked fantastically well for enterprises during the era of ‘at rest’ and ‘batch’ data,” Churchward noted. “In 2015, it started to become apparent that this architecture was being overused, but now it has become the Achilles heel for true real-time data analytics. Parking data, and then analyzing it, puts companies at a huge disadvantage. When it comes to getting information and taking action as fast as compute allows, companies relying on stale event data create a total eclipse of visibility, actions and any possible immediate solutions. This is an area where ‘good enough’ will prove strategically fatal.”

Monte Zweben, CEO of Splice Machine, agrees.

“The era of Hadoop disillusionment hits in full force, with many companies drowning in their data lakes, unable to get an ROI due to the complexity of Hadoop-based compute engines,” Zweben predicted for 2018.

To survive 2018, data lakes will have to start proving their business value, added Ken Hoang, vice president of strategy and alliances at data catalog specialist Alation.

“The new data dump – data lakes – has gone through experimental implementations over the past few years, and data lakes will begin to be shut down unless they prove they can deliver value,” Hoang said. “The hallmark of a successful data lake will be to have an enterprise catalog that brings together information search and management, along with artificial intelligence to deliver new insights to the business.”

However, Hoang does not believe all is lost for data lakes. He predicted that these and other big data centers may find a new opportunity with what he calls “super hubs,” which can offer “context as a service” through machine learning.

“Big data center implementations over the past 25 years (e.g., data warehouses, master data management, data lakes, Salesforce and ERP) created more data silos that were difficult to understand, relate or share,” Hoang said. “A hub that brings all centers together will provide the ability to link assets within them, enabling context as a service. In turn, it will generate more relevant and powerful predictive information for better and faster operational business outcomes.”

“We will see more and more companies treat computing in terms of data streams, rather than data that is just processed and landed in a database,” Dunning said. “These data flows capture key business events and reflect the structure of the business. A unified data fabric will be the foundation for building these large-scale, flow-based systems.”

Langley Eide, chief strategy officer at self-service data analytics specialist Alteryx, said IT won’t be alone in making data lakes generate value: line-of-business (LOB) analysts and chief digital officers (CDOs) will also have to take on the responsibility in 2018.

“Most analysts have not tapped into the vast amount of unstructured resources such as clickstream data, IoT data, log data, etc., that have flooded their data lakes; largely because it is difficult to do so,” Eide said. “But frankly, analysts are not doing their jobs if they leave this data untouched. It’s widely understood that many data lakes are underperforming assets: people don’t know what’s in there, how to access it, or how to create insights from the data. This reality will change in 2018, as more CDOs and enterprises want better ROI for their data lakes.”

Eide predicted that in 2018 analysts will replace “brute force” tools – such as Excel and SQL – with more programmatic techniques and technologies, such as data cataloging, to discover and derive more value from data.

The CDO will come of age

As part of this new push for better insights from data, Eide also predicted that the CDO role will fully develop in 2018.

“Data is essentially the new oil, and the CDO is starting to be recognized as the lynchpin for addressing one of the most important issues in business today: driving value from data,” Eide said. “Often with a budget of less than $10 million, one of the biggest challenges and opportunities for CDOs is to realize the highly publicized self-service opportunity by bringing corporate data assets closer to line-of-business users. In 2018, CDOs working to strike a balance between a centralized function and integrated LOB capabilities will ultimately get the biggest budgets.”

Rise of the data curator?

Tomer Shiran, CEO and co-founder of analytics startup Dremio, a driving force behind the Apache Arrow open source project, predicted that enterprises will see the need for a new role: the data curator.

Shiran commented that the data curator sits between data consumers (analysts and data scientists who use tools such as Tableau and Python to answer important questions with data) and data engineers (the people who move and transform data between systems using scripting languages, Spark, Hive and MapReduce). To be successful, data curators must understand the meaning of the data, as well as the technologies that are applied to it.

“The data curator is charged with understanding the types of analyses that need to be performed by different groups within the organization, which data sets are properly equipped for the job, and the steps in the process of taking the data in its natural state and shaping it into the necessary form for the work that a data consumer will perform,” Shiran noted. “The data curator uses systems, such as self-service data platforms, to accelerate the end-to-end process of providing access to essential data sets to data consumers without making infinite copies of the information.”

Data governance strategies will be key issues for all C-level executives

The European Union’s General Data Protection Regulation (GDPR) will go into effect on May 25, 2018, and it looms like a specter over the field of analytics, yet not all companies are ready.

The GDPR will apply directly in all EU member states, and will radically change how companies must seek consent to collect and process EU citizens’ data, explained Morrison & Foerster’s Global Privacy + Data Security Group attorneys Miriam Wugmeister, co-chair of Global Privacy; Lokke Moerel, European privacy expert; and John Carlin, president of Global Risk and Crisis Management (and former assistant attorney general for the U.S. Department of Justice’s National Security Division).

“Companies that rely on consent for all their processing operations will no longer be able to do so, and will need other legal bases (i.e., contractual necessity and legitimate interest),” they explained. “Companies will have to implement a whole new ecosystem for receiving notifications and consents.”

“When the Y2K frenzy hit, everyone was preparing for the odds they may or may not face,” noted Scott Gnau, CTO of Hortonworks. “Today, it seems that no one is adequately preparing for when GDPR applies in May 2018. Why not? We are currently in a phase where organizations are not only trying to deal with what’s coming, but are struggling to keep up with and address issues that need to be resolved right now. Many organizations are likely to rely on security chiefs to define the rules, systems, parameters, etc., to help their global systems integrators determine the best course of action. This is not a realistic expectation for an individual person’s role.”

To implement GDPR correctly, the C-suite must be informed, prepared and in communication with all facets of the organization, Gnau said. Organizations will need better management of the overall governance of their data assets. But large breaches, such as the Equifax breach that came to light in 2017, mean they will struggle to balance providing self-service access to data for employees with protecting that data from potential threats.

As a result, Gnau predicted that data governance will be a focal point for all organizations in 2018.

“A key objective should be to develop a system that balances data democratization, access, self-service analytics and regulation,” Gnau said. “Going forward, how we design data securely will have an impact on everyone: customers in the U.S. and abroad, the media, their partners and more.”

Zachary Bosin, director of solutions marketing for multi-cloud data management specialist Veritas Technologies, predicted that a U.S. company will be one of the first to be fined under GDPR.

“Despite the looming deadline, only 31% of companies surveyed by Veritas worldwide believe they are GDPR compliant,” Bosin said. “The penalties for non-compliance are steep, and this regulation will affect each and every company dealing with EU citizens.”

The proliferation of metadata management continues

It’s not just GDPR, certainly. The data deluge continues to grow, and governments around the world are imposing new regulations as a result. Within organizations, teams have more access to data than ever before. All this adds up to the growing importance of data governance, along with data quality, data integration and metadata management.

“Managing metadata and ensuring data privacy for regulations like GDPR join earlier trends like AI and IoT, but the unexpected trend of 2018 will be the convergence of data management technologies,” said Emily Washington, senior vice president of product management at data and analytics software provider Infogix. “Increasingly, companies are evaluating ways to optimize their overall technology stack because they want to leverage big data and analytics to create a better customer experience, achieve business objectives, gain a competitive advantage and ultimately become market leaders.”

Extracting meaningful insights and increasing operational efficiency will require flexible, integrated tools that enable users to quickly ingest, prepare, analyze and control data, Washington says. Metadata management, in particular, will be essential to support data governance, regulatory compliance and data management demands in enterprise data environments.

Predictive analytics helps improve data quality

As projects move into production, data quality is of increasing concern. This is especially true as IoT opens the floodgates even wider. Infogix says that in 2018 organizations will turn to machine learning algorithms to improve anomaly detection for data quality. By using historical patterns to predict future data quality results, companies can dynamically detect anomalies that might otherwise have gone unnoticed, or might have been found much later through manual intervention alone.
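To make the idea concrete, here is a minimal sketch of flagging data quality anomalies against a historical baseline. It is not Infogix's method and it is not machine learning proper: it is a plain z-score check, used here as a stand-in for the "learn from history, flag what deviates" pattern. The function name `find_anomalies`, the metric (daily percentage of null values in a column) and all figures are hypothetical.

```python
from statistics import mean, stdev

def find_anomalies(history, recent, threshold=3.0):
    """Return (index, value) pairs in `recent` that deviate from `history`.

    A value is flagged when it lies more than `threshold` sample standard
    deviations from the historical mean. `history` needs at least two points.
    """
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # History is perfectly flat, so any different value is a deviation.
        return [(i, v) for i, v in enumerate(recent) if v != baseline]
    return [(i, v) for i, v in enumerate(recent)
            if abs(v - baseline) / spread > threshold]

# Hypothetical daily null rates (%) for one column of an incoming feed.
history = [1.0, 1.2, 0.9, 1.1, 1.0, 0.8, 1.3, 1.0]
recent = [1.1, 0.9, 4.5]

print(find_anomalies(history, recent))  # [(2, 4.5)]
```

In practice the baseline would likely account for seasonality and trend, and the threshold would be tuned per metric; the sketch only shows why a historical baseline surfaces a spike that a manual spot check could miss.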

“As more data is generated through technologies such as IoT, it becomes increasingly difficult to manage and leverage,” Washington commented. “Integrated self-service tools provide a comprehensive view of a company’s data landscape to reach meaningful and timely conclusions. Full transparency into enterprise data assets will be crucial for successful analytics initiatives, addressing data governance and privacy needs, monetizing data assets, and more as we move into 2018.”

-Thor Olavsrud, CIO.com – CIOPeru.pe

Source: CIO Mexico